- Blog

- Logitech g230 mic not working with overwatch

- Viva pinata trouble in paradise online

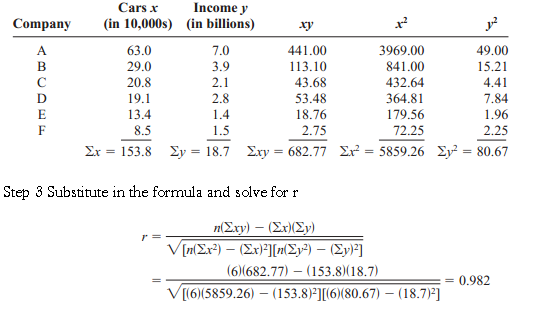

- Simple linear regression equation on phstat

- The amazing spider man full movie soap2day

- Reviews of chhello divas movie

- Clip studio paint version 1-7-4

- How to unblock adobe flash player for super monkey 2

- How to play heroes of might and magic online

- Blaylock wellness report subscription

- Zonet zew2500p driver download windows 7

- Memorex cd labels software free download

- Adobe flash player 64 bits para windows 10

- Apple pages templates gift certificate

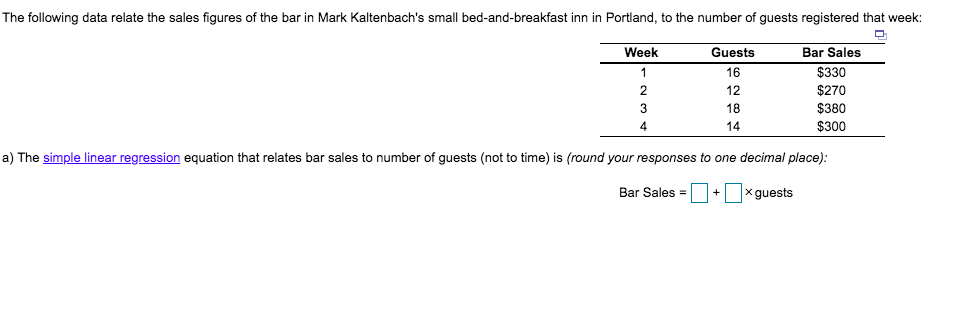

- Simple linear regression equation bar sales

- Free gmod download for windows 7

- Moosend free email sender

- Frank ocean albums 2016

- Heroine full movie watch online free 2012

- Watch 23 jump street full movie online free

- Drake thank me later album artwork

- Makemkv registration key october 2018

- Canon wireless setup utility

- Bitdefender total security 2019 reviews

- How to convert vob to mp4 windows

- Csr harmony bluetooth software not uninstalling

Linear regression is a statistical technique for estimating the mathematical relationship between a dependent variable (usually denoted Y) and an independent variable (usually denoted X). In other words, it predicts the change in the dependent variable according to the change in the independent variable.

- Dependent variable or criterion variable – the variable for which we wish to make a prediction.
- Independent variable or predictor variable – the variable used to explain the dependent variable.

In simple linear regression, only one independent variable is used to predict a single dependent variable, whereas in multiple linear regression more than one independent variable is used to predict a single dependent variable. In fact, the basic difference between simple and multiple regression lies in the number of explanatory variables. For example, simple linear regression can compare the crop yield rate against the rainfall rate in a season.

The first step of linear regression is to test the linearity assumption. This can be done by plotting the values in a graph known as a scatter plot, to observe the relationship between the dependent and independent variables; if the data are exponentially scattered, there is no point in creating the regression equation. Draw the line that covers the majority of the points; this line is considered the "best fit" line.

The mathematical equation of the line is $y = a + bx + \varepsilon$.

- Random error ($\varepsilon$, epsilon) – the difference between an observed value of y and the mean value of y for a given value of x.

Linear regression rests on the following assumptions:

- There is a linear relationship between the dependent and independent variables.
- All variables in the regression are multivariate normal.
- There is little or no multicollinearity in the data.
- The response variable is continuous, and the residuals have roughly the same spread along the whole regression line (homoscedasticity).

The method of least squares is a standard approach in regression analysis for determining the best fit line for a given data set. It basically provides a visual relationship between the given data points. In general, the dependent variable is plotted on the y-axis and the independent variable on the x-axis.

The least squares method determines the position of a straight line, also called the trend line, and the equation of that line. This straight line is also known as the best fit line. Least squares means that the overall solution minimizes the sum of the squares of the errors made in the results of every single equation. The least squares equations give the values of the coefficients a and b. The least squares estimators of a and b are computed as follows:

$$b = \frac{SS_{xy}}{SS_{xx}} = \frac{\sum (x_i - \bar{x})(y_i - \bar{y})}{\sum (x_i - \bar{x})^2}, \qquad a = \bar{y} - b\,\bar{x}$$

Furthermore, y can be predicted for a given value of x by substitution into the prediction equation $\hat{y} = a + bx$. For example, if a salesperson completes 15 training modules, the predicted achieved target sales are obtained by substituting $x = 15$ into the prediction equation.

Comparing the mathematical equation of the line, $y = a + bx + \varepsilon$, with the least squares line, $\hat{y} = a + bx$, shows that the random error $\varepsilon$ affects the error of prediction. Hence the variability of the random errors, $\sigma_\varepsilon^2$, is the key parameter when predicting with the least squares line.

Estimating the variability of the random error $\sigma_\varepsilon^2$. Example: from the example data, compute the variability of the random errors. The calculation gives $\hat{\sigma}_\varepsilon = 5.38$. Thus, most of the points will fall within $\pm 1.96\,\hat{\sigma}_\varepsilon$, i.e. $\pm 10.54$, of the line, so approximately 95% of the values should be in this region. Moreover, the graph shows that all the values are within $\pm 10.54$ of the line.

The existence of a significant relationship between the dependent and independent variable can be tested by checking whether b is equal to 0. If b is not equal to 0, there is a linear relationship. The null and alternative hypotheses are $H_0: b = 0$ and $H_a: b \neq 0$.

Example: from the example data, determine whether the slope is significant at a 95% confidence level. Determine the critical values of t for 8 degrees of freedom at the 95% confidence level.
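The least squares fit, the prediction for a given x, the variability of the random error, and the slope significance test can be sketched in a few lines of Python. This is a minimal illustration, not the article's own calculation: the data set below is hypothetical (ten made-up pairs of training modules completed and achieved target sales, chosen only so that there are 8 degrees of freedom), the function names `fit_least_squares` and `residual_std_error` are invented for this sketch, and the critical value 2.306 is the standard two-tailed t-table entry for 8 degrees of freedom at α = 0.05. It is not expected to reproduce the 5.38 quoted in the text.

```python
import math

# HYPOTHETICAL data, made up for illustration (not the article's data set):
# x = training modules completed, y = achieved target sales (%)
x = [2, 4, 5, 7, 8, 10, 11, 13, 14, 16]
y = [20, 28, 30, 41, 44, 52, 58, 66, 69, 80]


def fit_least_squares(x, y):
    """Return intercept a, slope b and SS_xx for the line y_hat = a + b*x."""
    n = len(x)
    x_bar = sum(x) / n
    y_bar = sum(y) / n
    ss_xx = sum((xi - x_bar) ** 2 for xi in x)
    ss_xy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    b = ss_xy / ss_xx          # slope
    a = y_bar - b * x_bar      # intercept
    return a, b, ss_xx


def residual_std_error(x, y, a, b):
    """Estimate sigma_epsilon as sqrt(SSE / (n - 2))."""
    sse = sum((yi - (a + b * xi)) ** 2 for xi, yi in zip(x, y))
    return math.sqrt(sse / (len(x) - 2))


a, b, ss_xx = fit_least_squares(x, y)
sigma_hat = residual_std_error(x, y, a, b)

# Prediction for a salesperson who completes 15 training modules
y_hat_15 = a + b * 15

# Roughly 95% of points should lie within this distance of the line
half_width = 1.96 * sigma_hat

# Test H0: b = 0 against Ha: b != 0
t_stat = b / (sigma_hat / math.sqrt(ss_xx))
T_CRIT_8DF = 2.306  # two-tailed t critical value, 8 df, alpha = 0.05
significant = abs(t_stat) > T_CRIT_8DF

print(f"y_hat = {a:.3f} + {b:.3f} x")
print(f"prediction at x = 15: {y_hat_15:.2f}")
print(f"sigma_hat = {sigma_hat:.3f}, 95% band = +/-{half_width:.2f}")
print(f"t = {t_stat:.2f}, slope significant: {significant}")
```

With real data, the same steps apply: replace `x` and `y`, and look up the critical t value for n − 2 degrees of freedom instead of the hard-coded 8-df constant.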